AmpereComputing were kind enough to send our Cloudbase Solutions office the new version of their top-tier server product, which incorporates their latest Ampere ALTRA ARM64 processor. The server has a beefy dual-socket AMPERE ALTRA setup, with 24 NVMe slots and up to 8 TB of installable RAM, spread over 8 memory channels.

Let the unboxing begin!

First, we can see the beautiful dual-socket setup, with each ALTRA CPU nicely placed between the row of fans and the back of the chassis. The server uses a 2U form factor, which was required to fit the dual-socket and multi-NVMe layout.

The AMPERE ALTRA CPU in the box has a whopping 80 cores running at 3.0 GHz in this variant (Altra AC-108021002P), built on a 7nm process. This server can take advantage of two such CPUs, for 160 cores spread over 2 NUMA nodes. The maximum amount of RAM is 8TB (4TB per socket) when using 32 DIMMs of 256GB each. In our setup, we have 2 x 32GB ECC DIMMs in a dual-channel configuration for each NUMA node, totaling 128GB.
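As a quick sanity check of the figures above, the arithmetic can be written out as a few lines of Python. The variable names are only illustrative; the numbers are the ones quoted in this article.

```python
# Figures quoted in the article; the names are illustrative only.
SOCKETS = 2
CORES_PER_SOCKET = 80
DIMM_SLOTS = 32
MAX_DIMM_GB = 256

total_cores = SOCKETS * CORES_PER_SOCKET         # cores across both sockets
max_ram_tb = DIMM_SLOTS * MAX_DIMM_GB // 1024    # maximum RAM in TB
max_ram_per_socket_tb = max_ram_tb // SOCKETS    # maximum RAM per socket in TB

# Memory installed in our box: 2 x 32 GB DIMMs per NUMA node.
installed_gb = SOCKETS * 2 * 32

print(total_cores, max_ram_tb, max_ram_per_socket_tb, installed_gb)
```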

    $> numactl --hardware
    available: 2 nodes (0-1)
    node 0 cpus: 0..79
    node 0 size: 64931 MB
    node 1 cpus: 80..159
    node 1 size: 64325 MB
    node distances:
    node   0   1
      0:  10  20
      1:  20  10
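If you want to consume this topology from a script, the `numactl --hardware` output can be parsed with a few regular expressions. The following is a minimal sketch, not an official parser: the function name `parse_numa_nodes` is made up for this example, and it accepts both the abbreviated `0..79` CPU ranges shown above and a plain space-separated CPU list.

```python
import re

# Sample text copied from the output shown in this article.
SAMPLE = """\
available: 2 nodes (0-1)
node 0 cpus: 0..79
node 0 size: 64931 MB
node 1 cpus: 80..159
node 1 size: 64325 MB
"""

def parse_numa_nodes(text):
    """Return {node_id: {"cpu_count": int, "size_mb": int}} (hypothetical helper)."""
    nodes = {}
    for line in text.splitlines():
        line = line.strip()
        cpus = re.match(r"node (\d+) cpus:\s*(.*)$", line)
        size = re.match(r"node (\d+) size:\s*(\d+) MB$", line)
        if cpus:
            node, spec = int(cpus.group(1)), cpus.group(2)
            if ".." in spec:
                # Abbreviated range, e.g. "0..79"
                first, last = (int(x) for x in spec.split(".."))
                count = last - first + 1
            else:
                # Plain list of CPU ids separated by spaces
                count = len(spec.split())
            nodes.setdefault(node, {})["cpu_count"] = count
        elif size:
            nodes.setdefault(int(size.group(1)), {})["size_mb"] = int(size.group(2))
    return nodes

nodes = parse_numa_nodes(SAMPLE)
print(nodes)
```

Summing the `size_mb` values gives the total memory visible to the operating system, which is slightly below the nominal 128GB.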

On the networking side, we have a BMC network port, 2 x 1Gb Intel i350 ports and an Open Compute Project (OCP 3.0) slot for one PCIe Gen4 network card, in our case a Broadcom BCM957504-N425G that supports 100Gbps over its 4 ports (25Gbps each). Furthermore, if you are a TensorFlow or AI workload aficionado, there is plenty of room for 3x double-width GPUs or 8x single-width GPUs (for example, 3x Nvidia A100 or 8x Nvidia T4).

The storage setup comes with 24 NVMe PCIe bays, one of which holds a 1TB SSD, plus an M.2 NVMe SSD placed inside the server, just south of the first socket.

With the back cover back in place, the server mounted in the rack and connected to a redundant power source (two power supplies of 2000 Watts each), let’s go and power on the system!

Before the OS installation, let’s make a small detour and check out the IPMI web interface, which is based on the MegaRAC SP-X web UI and is very fast. The BMC is an AST2600, firmware version 0.40.1. The H5Viewer console is snappy, and a complete power cycle takes approximately 2 minutes (from a powered-off state to the start of the operating system bootloader).

As our operating system of choice, we picked the latest developer build of Windows 11 ARM64, available for download as a VHDX at https://www.microsoft.com/en-us/software-download/windowsinsiderpreviewARM64 (Microsoft account required).

This version of Windows 11 was installed as a trial, for demo purposes only. At the time of writing, Windows 11 ARM64 build 25201 was available for download. The installation procedure is not yet straightforward, as Microsoft does not currently provide an ISO. The following steps were required to install this Windows version on the Altra server:

- Download the Windows 11 ARM64 Build 25201 VHDX from https://www.microsoft.com/en-us/software-download/windowsinsiderpreviewARM64

- Use qemu-img to convert the VHDX to a RAW file and copy the RAW file to a USB stick (USB Stick 1)

- qemu-img convert -O raw Windows11_InsiderPreview_Client_ARM64_en-us_25201.VHDX Windows11_InsiderPreview_Client_ARM64_en-us_25201.raw

- Download an Ubuntu 22.04 ARM64 Desktop image and burn it to a USB stick (USB Stick 2)

- Power on the server with the two USB sticks attached

- Boot into the Ubuntu 22.04 live session from USB Stick 2

- Use “dd” to copy the RAW file directly to the NVMe device of choice

- dd if=/mnt/Windows11_InsiderPreview_Client_ARM64_en-us_25201.raw of=/dev/nvme0n1

- Reboot the server
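The copy step above is just a block-by-block write of the RAW image onto the target device. As an illustration only, here is a minimal Python sketch of what that step does. The helper name `copy_image` and the 4 MiB default block size are our own choices for this example; `dd` itself defaults to 512-byte blocks, and a larger block size usually makes the copy noticeably faster.

```python
import os

def copy_image(src_path, dst_path, block_size=4 * 1024 * 1024):
    """Copy src_path to dst_path in fixed-size blocks and return the bytes written.

    Hypothetical helper for illustration: dst_path can be a regular file or,
    when run as root on Linux, a block device such as the NVMe disk.
    """
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    written = 0
    with open(src_path, "rb") as src, open(dst_path, "wb") as dst:
        while True:
            block = src.read(block_size)
            if not block:
                break
            dst.write(block)
            written += len(block)
        # Make sure the data has reached the device before returning.
        dst.flush()
        os.fsync(dst.fileno())
    return written

# Example call (destructive, overwrites the whole target device):
# copy_image("/mnt/Windows11_InsiderPreview_Client_ARM64_en-us_25201.raw", "/dev/nvme0n1")
```

Whichever tool you use, double-check the target device name first, as the write is destructive.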

After the server has been rebooted and the Out Of The Box Experience (OOBE) steps have been completed, Windows 11 ARM64 is ready to be used.

As you can see, Windows properly recognises the CPU and NUMA architecture, with 2 NUMA nodes of 80 cores each. We wanted to check whether a simple tool like cpuconsume would behave properly when pinned to a given NUMA node with the command “start /node <numa node> /affinity”. We observed that the CPU load was also spread onto the other node, so we decided to investigate this initially weird behaviour.

In Windows, two concepts apply to NUMA topology: Processor Groups and NUMA Support. Both work without any caveats for processors with a maximum of 64 cores, because a Processor Group can hold at most 64 logical processors. Here comes the interesting part: in our case each processor has 80 cores, so a default Windows installation splits the 160 cores into 3 Processor Groups. Group 0 aggregates 64 cores from NUMA Node 0, Group 2 aggregates 64 cores from NUMA Node 1, and Group 1 aggregates the remaining 16 + 16 cores from NUMA Node 0 and NUMA Node 1, for a total of 32 cores. If cpuconsume.exe is started with the command “cmd /c start /node 0 /affinity 0xffffffffff cpuconsume.exe -cpu-time 10000”, there is a 50% chance that it lands on either Processor Group 0 or Processor Group 1. Also, changing the affinity from Task Manager worked within a single group, but it was not possible to select processors from multiple Processor Groups.

Fortunately, AMPERE ALTRA’s firmware configuration offers a great trade-off, which makes running Windows applications on NUMA nodes simpler: the topology is configurable as Monolithic, Hemisphere or Quadrant.

The Monolithic configuration is the default one, with a 1:1 mapping between NUMA nodes and sockets (CPUs). In this case, Windows creates the 3 Processor Groups explained above. Setting the configuration to “Quadrant” did not seem to change how the Windows kernel sees the topology compared to Monolithic. The remaining and best option was “Hemisphere”, where the cores are split into 4 NUMA nodes and the Windows kernel maps those NUMA nodes to 4 Processor Groups of 40 cores each. In this case, Windows applications can have their affinity set correctly using the “start /node <node number> /affinity <cpu mask>” command.
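The reason Hemisphere works better comes down to simple arithmetic: a NUMA node can be mapped cleanly onto one Processor Group only if it has at most 64 logical processors. The sketch below checks that for the two layouts we observed. The function names are ours, and the code only encodes the 64-processor group limit, not the actual algorithm Windows uses to build its groups.

```python
MAX_LOGICAL_PROCESSORS_PER_GROUP = 64  # Windows Processor Group size limit

def cores_per_numa_node(total_cores, numa_nodes):
    """Cores in each NUMA node, assuming an even split (hypothetical helper)."""
    if numa_nodes <= 0 or total_cores % numa_nodes:
        raise ValueError("cores must divide evenly across NUMA nodes")
    return total_cores // numa_nodes

def node_fits_one_group(total_cores, numa_nodes):
    """True if a whole NUMA node fits inside a single Processor Group."""
    return cores_per_numa_node(total_cores, numa_nodes) <= MAX_LOGICAL_PROCESSORS_PER_GROUP

# NUMA node counts as observed in this article for a 160-core system.
for name, numa_nodes in (("Monolithic", 2), ("Hemisphere", 4)):
    per_node = cores_per_numa_node(160, numa_nodes)
    fits = node_fits_one_group(160, numa_nodes)
    print(f"{name}: {per_node} cores per node, fits in one group: {fits}")
```

With 80 cores per node, Monolithic exceeds the limit and a node has to be spread over more than one group, while the 40-core nodes of Hemisphere each fit in a group of their own.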

In the above screenshot, you can see that cpuconsume.exe has been started on each NUMA Node and configured to use only 39 of the 40 cores (mask 0xfffffffffe, which leaves out the first core of the node).
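The affinity masks used with “start /affinity” are plain bitmasks in which bit N selects core N of the group. A small helper, hypothetical and for illustration only, shows how the two masks used in this article are derived:

```python
def affinity_mask(cpus):
    """Build a bitmask with one bit set per CPU index (hypothetical helper)."""
    mask = 0
    for cpu in cpus:
        if cpu < 0:
            raise ValueError("CPU indexes must be non-negative")
        mask |= 1 << cpu
    return mask

print(hex(affinity_mask(range(40))))     # all 40 cores: 0xffffffffff
print(hex(affinity_mask(range(1, 40))))  # cores 1..39:  0xfffffffffe
```

Note that such a mask cannot describe more than 64 cores, which matches the Processor Group size limit.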

On the Windows driver side, the Broadcom OCP3 card is not currently supported on this Windows version, and neither are the Intel I350 network cards. Fortunately, all the Mellanox series cards are supported, and we could add one such card to the system from the Ampere eMAG server we had received before (see https://cloudbase.it/cloudbase-init-on-windows-arm64/). For the management network, we used a classic approach: a USB 2.0 to 100Mb Ethernet adapter, which suited our installation use case perfectly.

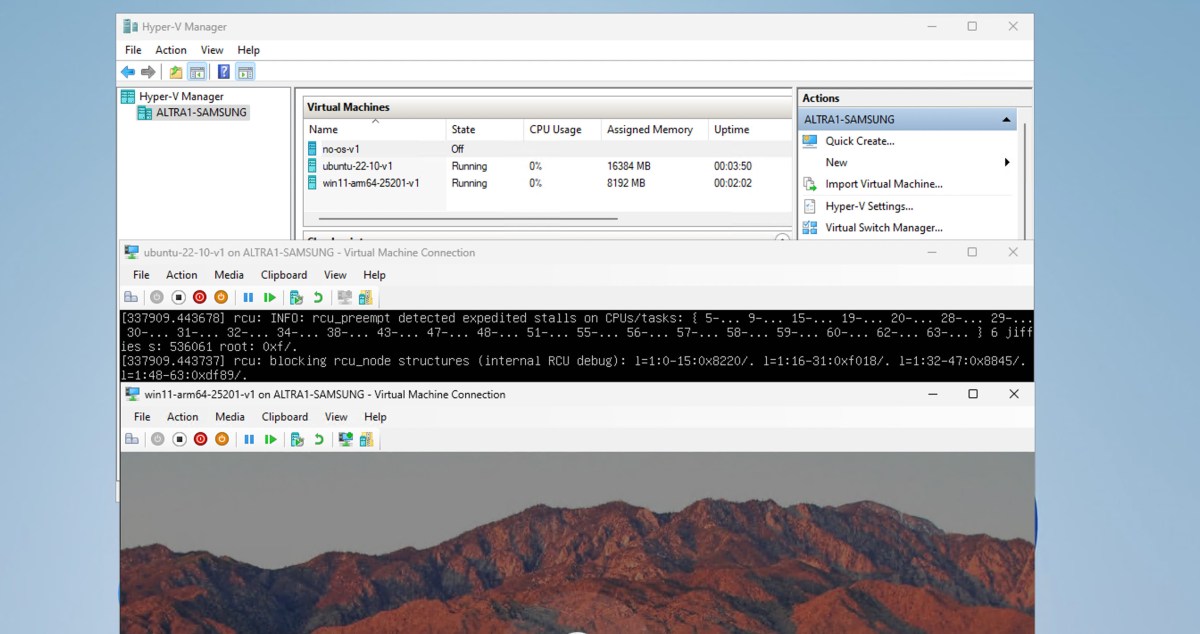

On the virtualization side, we could easily turn on Hyper-V and try booting both Windows and Linux Hyper-V virtual machines. Windows works perfectly out of the box as a virtual machine, while Linux VMs require kernel 5.15-rc1 or newer, which includes the patch that adds Hyper-V ARM64 boot support. For example, Ubuntu 22.04 or higher will boot on Hyper-V. We noticed two issues on the virtualization side: nested virtualization is currently not supported (the virtual machine fails to start if processor virtualization extensions are enabled), and the Linux kernel still needs fixes for the kernel stall issues seen in the screenshot below.
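If you want to check in advance whether a Linux guest meets the kernel requirement mentioned above, a simple comparison of the kernel release string is enough for mainline kernels. This is a rough sketch with a made-up function name: it only compares the major and minor version numbers, so it cannot tell whether a distribution has backported the patch to an older kernel.

```python
import re

MIN_KERNEL = (5, 15)  # first kernel series with Hyper-V ARM64 boot support

def kernel_meets_minimum(release):
    """Return True if a kernel release string is at least 5.15 (hypothetical helper)."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if not match:
        raise ValueError(f"unrecognised kernel release: {release!r}")
    return (int(match.group(1)), int(match.group(2))) >= MIN_KERNEL

# Illustrative release strings; on a running guest, use platform.release().
print(kernel_meets_minimum("5.15.0-25-generic"))
print(kernel_meets_minimum("5.13.0-19-generic"))
```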

The latest Windows version manages the hardware well, and the experience was flawless during the short time we spent with it. We will come back with an in-depth review of performance and stability. AmpereComputing did a great job with their latest server products, kudos to them!